Unifying data across McDonald’s in the Nordics

McDonald’s Nordics operates in four markets (Sweden, Denmark, Norway, and Finland) with over 400 restaurants. When McDonald’s Nordics was purchased by the master franchise Food Folk, a new need arose: to report on all Nordic franchisees to both Food Folk and McDonald’s Global.

However, each market had its own IT department and distinct data warehouse solution:

“Denmark had a data warehouse alongside Tableau for reporting, Sweden was using an Oracle database with homemade applications, and so on. Everyone had their own solution,” said Cristian Ivanoff, Data Engineer at Food Folk. “We needed to create one platform, one data warehouse, for all countries.”

Vast amounts of data—from sales to products

McDonald’s collects granular sales data from all orders in their franchises, from in-person and online orders to orders from third-party sources such as Uber Eats. The data includes receipt-level detail, down to the amount of time required to serve each burger.

They use this data to improve performance across the business—for example, by tracking and fine-tuning the amount of time to fulfill a drive-through order, starting from the customer's approach to the order window.

In addition to order and transaction data, the company also collects:

- financial data for accounting, reporting to the board, and optimizing each franchise location.

- operational data such as product and menu information, which allows the company to account for regional differences (such as distinct product lines and ingredients by country) in analytics downstream.

Creating a centralized data stack with Snowflake, Fivetran, and dbt Cloud on Data Vault 2.0

Assessing and selecting vendors

Since each market had its own solution, it was up to the parent organization to define what the new centralized data stack would look like.

Cristian and the data engineering team began by assessing the company's data use cases. “We started to look at our outputs and tracked backward to all our existing tools and vendors,” he said. After evaluating capabilities and making a short list, they selected Snowflake for storage and Fivetran for data loading. “And, because I believe in an SQL-first approach, I wanted to use dbt,” added Cristian.

Why they chose dbt Cloud

McDonald’s Nordics initially implemented dbt Core, but upgraded to dbt Cloud to take advantage of its built-in scheduler and data lineage. Besides the appeal of these dbt Cloud capabilities, they found it practical to keep data transformations decoupled from other parts of the stack.

“We needed these features, but we also needed to be more independent from the other tools we picked for our new stack, such as our new data warehouse and data visualization tool,” said Cristian. “We don’t know if we’ll keep these products forever, so we wanted to separate our models with dbt Cloud.”

Why they chose Data Vault 2.0

The data engineering team initially used a traditional Kimball structure to organize their data but ran into challenges soon after rollout. Every developer had a slightly different approach to formatting data or handling slowly-changing dimensions (SCDs).

“The data started getting messy, with strange transformations,” said Cristian. The variability in their data development code led to different versions of the same dimensions.

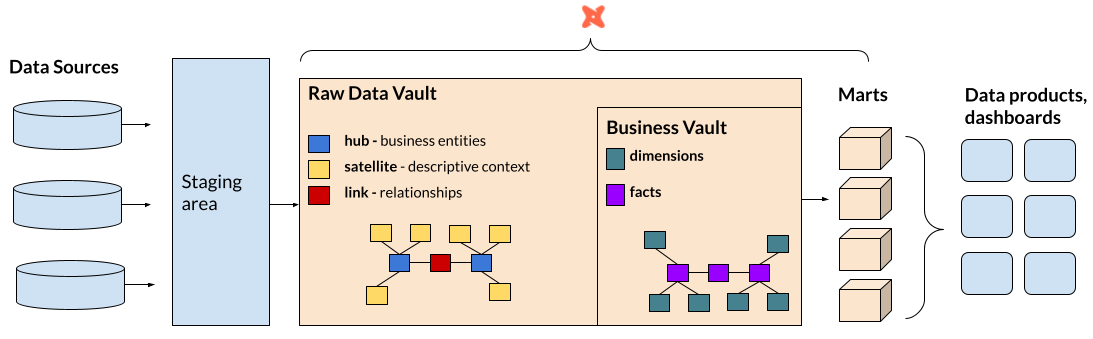

At that point, the team decided to explore Data Vault 2.0, a data modeling technique used to organize data from many source systems in a data warehouse. With Data Vault, data is separated into:

- Hubs: business entities / keys

- Satellites: descriptive context

- Links: relationships between hubs

This separation of concerns keeps data organized at the ingestion point, to make downstream data modeling easier.
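To make the split concrete, here is a minimal Python sketch of how one source record could be divided into hub, link, and satellite rows. It is an illustration only, not the team's actual models: the table names, column names, the `pos_sweden` source label, and the sample record are all hypothetical, and MD5 is assumed as the hash function for the keys.

```python
import hashlib
from datetime import datetime, timezone


def hash_key(*business_keys: str) -> str:
    """Hash normalised business keys into a deterministic key (MD5 assumed)."""
    normalised = "||".join(key.strip().upper() for key in business_keys)
    return hashlib.md5(normalised.encode("utf-8")).hexdigest()


def split_record(record: dict, source: str, loaded_at: datetime) -> dict:
    """Split one flat source record into hub, link, and satellite rows."""
    restaurant_hk = hash_key(record["restaurant_id"])
    product_hk = hash_key(record["product_id"])
    # Every row carries load metadata so it can be traced back to its source.
    meta = {"load_date": loaded_at, "record_source": source}
    return {
        # Hubs: one row per business key.
        "hub_restaurant": {"restaurant_hk": restaurant_hk,
                           "restaurant_id": record["restaurant_id"], **meta},
        "hub_product": {"product_hk": product_hk,
                        "product_id": record["product_id"], **meta},
        # Link: the relationship between the two hubs.
        "link_restaurant_product": {
            "link_hk": hash_key(record["restaurant_id"], record["product_id"]),
            "restaurant_hk": restaurant_hk,
            "product_hk": product_hk, **meta},
        # Satellite: descriptive context, plus a hash used to detect changes.
        "sat_product_details": {
            "product_hk": product_hk,
            "product_name": record["product_name"],
            "price": record["price"],
            "hashdiff": hash_key(record["product_name"], str(record["price"])),
            **meta},
    }


# Made-up sample record, for illustration only.
record = {"restaurant_id": "se-0042", "product_id": "p-100",
          "product_name": "Cheeseburger", "price": 25.0}
rows = split_record(record, "pos_sweden",
                    datetime(2024, 1, 1, tzinfo=timezone.utc))
```

Because the keys are derived only from the business keys, the same restaurant arriving from two different source systems would resolve to the same hub row, while each system's descriptive attributes could live in separate satellites.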

Like many teams using the Data Vault 2.0 approach, Cristian's team built data marts (dimensional models or star schemas) on top of their Data Vault models, to prepare the information for use in downstream applications and BI tools.

“We needed to have rules to coordinate the slowly-changing dimensions, and to do it in such a way where we could still reuse all transformations easily. We required a standard and that’s what Data Vault gave us,” Cristian explained.

Accelerating Data Vault implementation in dbt Cloud

To streamline their initial setup, the McDonald's Nordics team used AutomateDV to generate pre-built, Data Vault-compliant models and macros in their warehouse. This sped up their implementation and allowed Cristian and his team to focus on refining business logic.

“We didn’t need to do a lot of investigation or setting new rules. The AutomateDV package handles the technical parts, with macros and materialization macros, creating the SQL,” explained Cristian. “We don’t need to think too much about the technical stuff and can instead focus on modeling our business concepts.”

“What we do with Data Vault wouldn’t be possible without dbt,” he shared. “I’ve done Data Vault with Matillion and Dataform, but in both cases, it required significant amounts of work even just to get started. We had to create all the packages and macros ourselves from scratch.”

Reaping the benefits of a new data structure

Access to historical data even when not previously in-scope

With the Data Vault structure, McDonald’s Nordics could now access all historical changes made to a dimension.

When the platform team built the views for restaurant menus, the original brief from stakeholders said they would only need to report on the latest version of each menu. Once the report was delivered, however, the requirements changed: stakeholders also wanted to see how menus had changed over time. By visualizing which items were added and removed, they could analyze how those changes affected operations and sales.

“With the previous structure, we’d have to make many changes in the dimensions to get this data,” said Cristian. “But because we had all the historical changes and links in the Data Vault, we could quickly implement the changes. It was very satisfying how little time it took to deploy the new code.”
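The idea behind this can be sketched in a few lines of Python. This is a simplified illustration, not the team's implementation: it assumes a hypothetical satellite that stores one row per menu item each time its status changes, and all names and sample data are made up.

```python
from datetime import date

# Hypothetical satellite rows: one row per menu item per detected change.
SAT_MENU_ITEM = [
    {"restaurant": "R1", "item": "Cheeseburger", "on_menu": True,
     "load_date": date(2024, 1, 1)},
    {"restaurant": "R1", "item": "Seasonal Burger", "on_menu": True,
     "load_date": date(2024, 1, 1)},
    {"restaurant": "R1", "item": "Seasonal Burger", "on_menu": False,
     "load_date": date(2024, 3, 1)},
    {"restaurant": "R1", "item": "Veggie Wrap", "on_menu": True,
     "load_date": date(2024, 3, 1)},
]


def menu_as_of(rows, restaurant, as_of):
    """Return the set of items on a restaurant's menu on a given date."""
    latest = {}
    # Replay changes in load order; later rows overwrite earlier ones.
    for row in sorted(rows, key=lambda r: r["load_date"]):
        if row["restaurant"] == restaurant and row["load_date"] <= as_of:
            latest[row["item"]] = row["on_menu"]
    return {item for item, on_menu in latest.items() if on_menu}


def menu_changes(rows, restaurant, start, end):
    """Compare the menu at two dates and report added and removed items."""
    before = menu_as_of(rows, restaurant, start)
    after = menu_as_of(rows, restaurant, end)
    return {"added": after - before, "removed": before - after}


changes = menu_changes(SAT_MENU_ITEM, "R1", date(2024, 2, 1), date(2024, 4, 1))
```

Since every change is kept as its own row, the "latest menu" report and the "menu over time" report can both be derived from the same stored history, without reloading or restructuring the data.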

Easier troubleshooting and higher trust from business users

Beyond faster delivery of new data requests, the Data Vault structure has a second advantage: data traceability. Combined with dbt’s data lineage, it lets McDonald’s Nordics connect all the dots from raw data to reporting views.

“I can see when I got the record, and I can trace the record back to the raw file or raw table created by Fivetran,” said Cristian. “With data lineage, I can also see which views were affected. This makes troubleshooting a lot easier and gives us a more robust data warehouse.”

Power BI developers servicing business users

Although traceability is not directly visible to business users, the robust data warehouse it creates leads to faster delivery times, better governance, and more trustworthy data.

“People don’t care how they get the data, just that they have the correct, quality data,” said Cristian. “With dbt, we ensure this is the case.”

The analytics team, which includes five Power BI developers, creates reports and dashboards based on the business logic and views delivered by the data platform team.

These developers have their own schema in Snowflake and can use it to access the dimensional and fact tables. Business users can go straight to the Power BI team whenever they have data questions or report requests.

Moving forward: democratization and process

Currently, Power BI developers aren’t exposed to the raw data that is in the “hubs,” “links,” or “satellites.” This leads analysts to request guidance from the platform team. A new process, where BI developers can view and understand all the assets in the Data Vault, is coming soon.

To further democratize the new data stack, McDonald’s Nordics also has a project to train all business analysts and other business users to create their own reports. By broadening who can access and model the data, while maintaining a governed standard, the team can further increase the velocity of data insights.